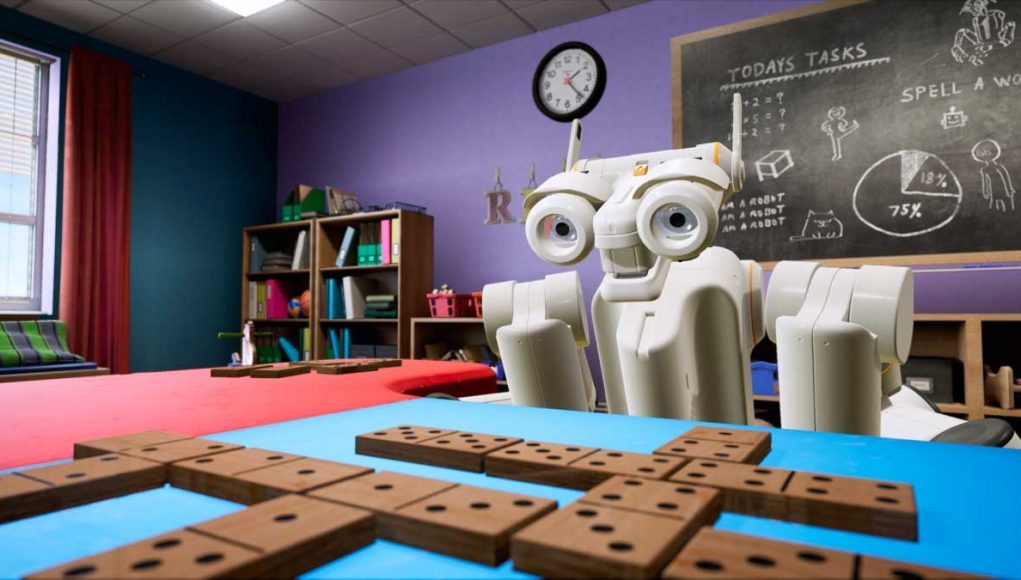

At SIGGRAPH 2017, NVIDIA was showing off its Isaac robot, which had been trained to play dominoes inside the virtual environment of NVIDIA’s Project Holodeck. They’re using Unreal Engine to simulate interactions with people in VR in order to train a robot to play dominoes. They can use a unified code base of AI algorithms for deep reinforcement learning within VR, and then apply that same code base to drive a physical Baxter robot. This creates a safe context for training and debugging the robot’s behavior within a virtual environment, and also for experimenting with cultivating interactions with the robot that are friendly, exciting, and entertaining. This will allow humans to build trust by interacting with robots in a virtual environment, so that they are more comfortable and familiar with interacting with physical robots in the real world.


I talked with Omer Shapira, NVIDIA’s senior VR designer on this project, at the SIGGRAPH conference in August. We talked about using Unreal Engine and Project Holodeck to train AI, the variety of AI frameworks that can use VR as a reality simulator, stress testing for edge cases and anomalous behaviors in a safe environment, and how they’re cultivating social awareness and robot behaviors that improve human-computer interactions.

Here’s NVIDIA CEO Jensen Huang talking about using VR to train robots & AI:

If you’re interested in learning more about AI, then be sure to check out the Voices of AI podcast, which just released its first five episodes.

Support Voices of VR

- Subscribe on iTunes

- Donate to the Voices of VR Podcast Patreon

Music: Fatality & Summer Trip